- ✅ Benchmark-topping performance.

- ✅ Simple and extensible single C++11 header implementation.

- ✅ SIMD-optimized and user-defined metrics with JIT compilation.

- ✅ Variable dimensionality vectors for unique applications, including search over compressed data.

- ✅ Bitwise Tanimoto and Sorensen coefficients for Genomics and Chemistry applications.

- ✅ Hardware-agnostic f16 & i8 - half-precision & quarter-precision support.

- ✅ View large indexes from disk without loading into RAM.

- ✅ Space-efficient point-clouds with uint40_t, accommodating 4B+ size.

- ✅ Compatible with OpenMP and custom "executors", for fine-grained control over CPU utilization.

- ✅ Heterogeneous lookups, renaming/relabeling, and on-the-fly deletions.

- ✅ Semantic Search and Joins.

Comparison with FAISS

FAISS is a widely recognized standard for high-performance vector search engines. USearch and FAISS both employ the same HNSW algorithm, but they differ significantly in their design principles. USearch is compact and broadly compatible without sacrificing performance, with a primary focus on user-defined metrics and fewer dependencies.

| FAISS | USearch | |

|---|---|---|

| Implementation | 84 K SLOC in faiss/ | 3 K SLOC in usearch/ |

| Supported metrics | 9 fixed metrics | Any User-Defined metrics |

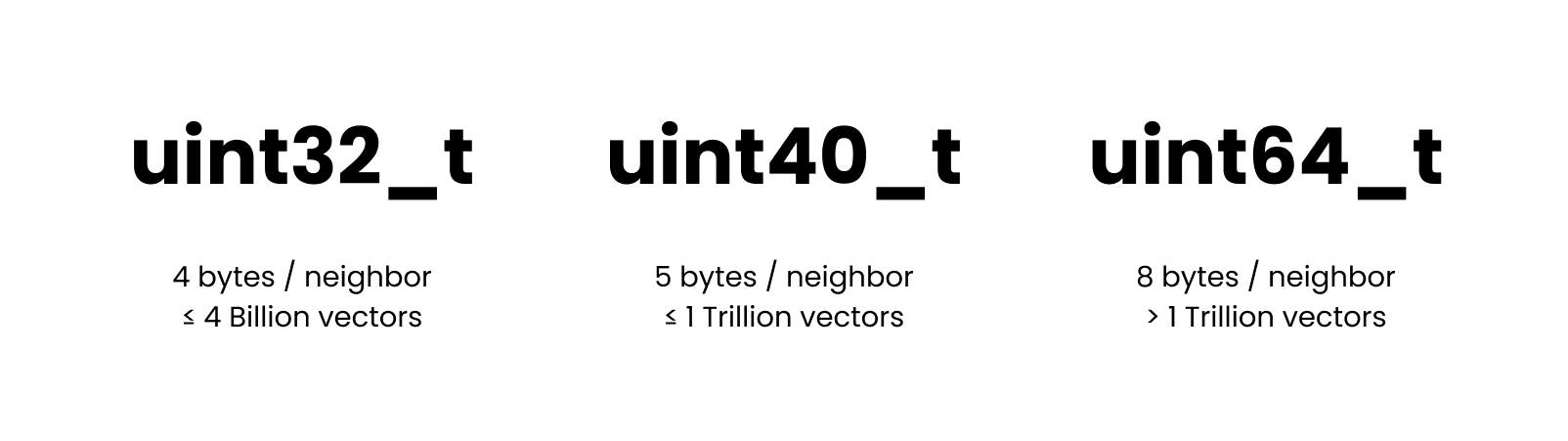

| Supported ID types | uint32_t, uint64_t | uint32_t, uint40_t, uint64_t |

| Dependencies | BLAS, OpenMP | None |

| Bindings | SWIG | Native |

| Acceleration | Learned Quantization | Downcasting |

Base functionality is identical to FAISS, and the interface should be familiar if you have ever investigated Approximate Nearest Neighbors search:

$ pip install usearch numpy

import numpy as np

from usearch.index import Index, Matches

index = Index(

ndim=3, # Define the number of dimensions in input vectors

metric='cos', # Choose 'l2sq', 'haversine' or other metric, default = 'ip'

dtype='f32', # Quantize to 'f16' or 'i8' if needed, default = 'f32'

connectivity=16, # Optional: How frequent should the connections in the graph be

expansion_add=128, # Optional: Control the recall of indexing

expansion_search=64, # Optional: Control the quality of search

)

vector = np.array([0.2, 0.6, 0.4])

index.add(42, vector)

matches: Matches = index.search(vector, 10)

assert len(index) == 1

assert len(matches) == 1

assert matches[0].key == 42

assert matches[0].distance <= 0.001

assert np.allclose(index[42], vector)

User-Defined Functions

While most vector search packages concentrate on just a couple of metrics - "Inner Product distance" and "Euclidean distance," USearch extends this list to include any user-defined metrics. This flexibility allows you to customize your search for a myriad of applications, from computing geo-spatial coordinates with the rare Haversine distance to creating custom metrics for composite embeddings from multiple AI models.
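To make the idea of a user-defined metric concrete, here is a minimal pure-Python sketch of the Haversine distance mentioned above. The function name `haversine_km`, the (latitude, longitude) in degrees layout, and the 6371 km mean Earth radius are assumptions made for this illustration. It is not USearch's own kernel, and the brute-force lookup only shows the metric in use.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumed mean Earth radius

def haversine_km(a, b):
    """Great-circle distance between two (latitude, longitude) pairs in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    # Clamp to 1.0 to guard against rounding errors before taking the arcsine
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, h)))

# Brute-force nearest neighbor over a handful of approximate city coordinates
cities = {"Yerevan": (40.18, 44.51), "London": (51.51, -0.13), "Tokyo": (35.68, 139.69)}
query = (48.86, 2.35)  # roughly Paris
nearest = min(cities, key=lambda name: haversine_km(cities[name], query))
```

Any function with this shape (two points in, one scalar out) can play the role of the metric.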

Unlike older approaches indexing high-dimensional spaces, like KD-Trees and Locality Sensitive Hashing, HNSW doesn't require vectors to be identical in length. They only have to be comparable. So you can apply it in obscure applications, like searching for similar sets or fuzzy text matching, using GZip as a distance function.
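The compression-based matching mentioned above can be sketched with the Normalized Compression Distance. This is an illustrative example rather than USearch code: it uses the standard-library `zlib` module (the same DEFLATE algorithm GZip is built on), and the helper names `compressed_size` and `ncd` are made up for this sketch. Note that the inputs have different lengths, they only need to be comparable.

```python
import zlib

def compressed_size(data: bytes) -> int:
    return len(zlib.compress(data, 9))

def ncd(a: bytes, b: bytes) -> float:
    """Normalized Compression Distance: small for similar inputs, close to 1 for unrelated ones."""
    ca, cb, cab = compressed_size(a), compressed_size(b), compressed_size(a + b)
    return (cab - min(ca, cb)) / max(ca, cb)

documents = [
    b"approximate nearest neighbors search over dense vectors",
    b"a recipe for sourdough bread with rye flour and sea salt",
    b"the stable marriage problem and the Gale-Shapley algorithm",
]
query = b"approximate nearest neighbor search over sparse vectors"

# Brute-force lookup: the document that compresses best together with the query
best = min(documents, key=lambda doc: ncd(query, doc))
```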

Read more about JIT and UDF in USearch Python SDK.

Memory Efficiency, Downcasting, and Quantization

Training a quantization model and reducing dimensionality are common approaches to accelerating vector search. Those, however, are not always reliable, can significantly affect the statistical properties of your data, and require regular adjustments if your distribution shifts.

Instead, we have focused on high-precision arithmetic over low-precision downcasted vectors.

The same index, and add and search operations will automatically down-cast or up-cast between f32_t, f16_t, f64_t, and i8_t representations, even if the hardware doesn't natively support it.
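The following sketch shows the general idea of computing a distance in high precision over downcasted vectors. The scaling factor of 127 and the helper names `to_i8` and `cosine_distance` are assumptions for this example. USearch's actual conversion rules may differ.

```python
import math

def to_i8(vector):
    """Downcast floats in [-1, 1] to 8-bit integers by scaling; the factor 127 is an assumption."""
    return [max(-127, min(127, round(x * 127))) for x in vector]

def cosine_distance(a, b):
    # Accumulate in wide types (Python ints and floats), so products of i8 values never overflow
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

a, b = [0.2, 0.6, 0.4], [0.1, 0.7, 0.3]
exact = cosine_distance(a, b)
downcasted = cosine_distance(to_i8(a), to_i8(b))
error = abs(exact - downcasted)  # stays small for this pair
```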

Continuing the topic of memory efficiency, we provide a uint40_t type to allow collections with over 4B vectors without allocating 8 bytes for every neighbor reference in the proximity graph.
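A rough sketch of the arithmetic behind 40-bit references follows. The helpers `pack_uint40` and `unpack_uint40`, the little-endian layout, and the graph with 32 neighbor slots per node are hypothetical and only illustrate the saving. They do not describe USearch's internal memory layout.

```python
UINT40_BYTES = 5
UINT40_MAX = 2 ** 40 - 1  # 1,099,511,627,775 addressable entries

def pack_uint40(value: int) -> bytes:
    """Store an identifier in 5 little-endian bytes instead of 8."""
    if not 0 <= value <= UINT40_MAX:
        raise ValueError("value does not fit into 40 bits")
    return value.to_bytes(UINT40_BYTES, "little")

def unpack_uint40(buffer: bytes) -> int:
    return int.from_bytes(buffer[:UINT40_BYTES], "little")

# Hypothetical graph: 5 billion nodes with 32 neighbor slots each
nodes, slots = 5_000_000_000, 32
saved_gb = nodes * slots * (8 - UINT40_BYTES) / 1e9  # versus 8-byte references

packed = pack_uint40(5_000_000_000)  # beyond the uint32_t range
```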

| | FAISS, f32 | USearch, f32 | USearch, f16 | USearch, i8 |
|---|---|---|---|---|
| Batch Insert | 16 K/s | 73 K/s | 100 K/s | 104 K/s +550% |
| Batch Search | 82 K/s | 103 K/s | 113 K/s | 134 K/s +63% |
| Bulk Insert | 76 K/s | 105 K/s | 115 K/s | 202 K/s +165% |
| Bulk Search | 118 K/s | 174 K/s | 173 K/s | 304 K/s +157% |
| Recall @ 10 | 99% | 99.2% | 99.1% | 99.2% |

Dataset: 1M vectors sample of the Deep1B dataset. Hardware: c7g.metal AWS instance with 64 cores and DDR5 memory. HNSW was configured with identical hyper-parameters: connectivity M=16, expansion @ construction efConstruction=128, and expansion @ search ef=64. Batch size is 256. Both libraries were compiled for the target architecture. Jump to the Performance Tuning section to read about the effects of those hyper-parameters.

Disk-based Indexes

With USearch, you can serve indexes from external memory, enabling you to optimize your server choices for indexing speed and serving costs. This can result in a 20x cost reduction on AWS and other public clouds.

index.save("index.usearch")

loaded_copy = index.load("index.usearch")

view = Index.restore("index.usearch", view=True)

other_view = Index(ndim=..., metric=CompiledMetric(...))

other_view.view("index.usearch")
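The general mechanism behind viewing a file without loading it is memory mapping. The sketch below uses only the Python standard library and a made-up flat file of f32 vectors. The file name, layout, and `read_vector` helper are assumptions for illustration and do not reflect the actual .usearch file format.

```python
import mmap
import os
import struct
import tempfile

NDIM = 4
ROW_BYTES = NDIM * 4  # four f32 scalars per vector

# Write 1,000 toy vectors as a flat array of little-endian f32 values
path = os.path.join(tempfile.mkdtemp(), "vectors.bin")
with open(path, "wb") as file:
    for i in range(1000):
        file.write(struct.pack(f"<{NDIM}f", *(float(i + j) for j in range(NDIM))))

def read_vector(view, i):
    """Decode the i-th vector straight from the mapped file."""
    return list(struct.unpack_from(f"<{NDIM}f", view, i * ROW_BYTES))

# Map the file instead of reading it, so the OS pages in only the touched regions
with open(path, "rb") as file:
    with mmap.mmap(file.fileno(), 0, access=mmap.ACCESS_READ) as view:
        row = read_vector(view, 742)
```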

Exact, Approximate, and Multi-Index Lookups

Approximate search methods, such as HNSW, are predominantly used when an exact brute-force search becomes too resource-intensive.

This typically occurs when you have millions of entries in a collection.

For smaller collections, we offer a more direct approach with the search method.

from usearch.index import search, MetricKind, Matches, BatchMatches

import numpy as np

# Generate 10'000 random vectors with 1024 dimensions

vectors = np.random.rand(10_000, 1024).astype(np.float32)

vector = np.random.rand(1024).astype(np.float32)

one_in_many: Matches = search(vectors, vector, 50, MetricKind.L2sq, exact=True)

many_in_many: BatchMatches = search(vectors, vectors, 50, MetricKind.L2sq, exact=True)

By passing the exact=True argument, the system bypasses indexing altogether and performs a brute-force search through the entire dataset using SIMD-optimized similarity metrics from SimSIMD.

When compared to FAISS's IndexFlatL2 in Google Colab, USearch may offer up to a 20x performance improvement:

- faiss.IndexFlatL2: 55.3 ms.

- usearch.index.search: 2.54 ms.
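Conceptually, the exact mode described above is a full scan that keeps the closest candidates. Here is a minimal pure-Python sketch of that idea. The names `l2sq` and `exact_search` are chosen for this example, and it has none of the SIMD optimizations that make the real implementation fast.

```python
import heapq
import random

def l2sq(a, b):
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def exact_search(dataset, query, count):
    """Scan every vector and keep the `count` closest as (distance, key) pairs."""
    scored = ((l2sq(vector, query), key) for key, vector in enumerate(dataset))
    return heapq.nsmallest(count, scored)

random.seed(0)
dataset = [[random.random() for _ in range(8)] for _ in range(1000)]
matches = exact_search(dataset, dataset[42], 5)  # the query itself comes back first
```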

For larger workloads targeting billions or even trillions of vectors, parallel multi-index lookups become invaluable. They remove the need to construct a single, massive index, allowing users to query multiple smaller ones instead.

from usearch.index import Indexes

multi_index = Indexes(

indexes=[...], # Iterable[usearch.index.Index]

paths=[...], # Iterable[os.PathLike]

view=False, # bool

threads=0, # int

)

multi_index.search(...)
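The reason querying several smaller indexes works is that the global top results are always contained in the union of the per-shard top results. The sketch below demonstrates this with brute-force shards and a thread pool. `search_shard` and `search_many` are hypothetical helpers, not part of the `Indexes` API.

```python
import heapq
import random
from concurrent.futures import ThreadPoolExecutor

def l2sq(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def search_shard(shard, query, count):
    """Top `count` (distance, key) pairs within a single shard, sorted ascending."""
    return heapq.nsmallest(count, ((l2sq(v, query), k) for k, v in shard.items()))

def search_many(shards, query, count, threads=4):
    """Query every shard in parallel and merge the sorted partial results."""
    with ThreadPoolExecutor(max_workers=threads) as pool:
        partial = list(pool.map(lambda shard: search_shard(shard, query, count), shards))
    return list(heapq.merge(*partial))[:count]

random.seed(1)
vectors = {key: [random.random() for _ in range(8)] for key in range(900)}
shards = [{k: v for k, v in vectors.items() if k % 3 == s} for s in range(3)]
query = [0.5] * 8
merged = search_many(shards, query, 10)
```

With approximate shards the same merge applies, although recall then depends on each shard's search quality.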

Joins, One-to-One, One-to-Many, and Many-to-Many Mappings

One of the big questions these days is how AI will change the world of databases and data management.

Most databases are still struggling to implement high-quality fuzzy search, and the only kind of joins they know are deterministic.

A join is different from searching for every entry, as it requires a one-to-one mapping, banning collisions among separate search results.

| Exact Search | Fuzzy Search | Semantic Search ? |
|---|---|---|
| Exact Join | Fuzzy Join ? | Semantic Join ?? |

Using USearch, one can implement approximate, fuzzy, and semantic joins with sub-quadratic complexity. This can come in handy in any fuzzy-matching task common to Database Management Software.

men = Index(...)

women = Index(...)

pairs: dict = men.join(women, max_proposals=0, exact=False)
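To show what a collision-free, one-to-one mapping means in practice, here is a small Gale-Shapley style sketch in pure Python. It is not the implementation behind `join`: the `stable_join` helper is hypothetical, and it ranks candidates by brute force, which is quadratic. In an index-backed join those candidate lists would come from approximate search instead.

```python
def stable_join(left, right, distance):
    """Gale-Shapley style one-to-one matching; returns {left_key: right_key}."""
    # Each left entry ranks all right entries by distance (brute force here)
    ranked = {l: sorted(right, key=lambda r: distance(left[l], right[r])) for l in left}
    proposals = {l: 0 for l in left}  # how many candidates each left entry has tried
    holder = {}                       # right key -> left key currently holding it
    free = list(left)
    while free:
        l = free.pop()
        if proposals[l] == len(ranked[l]):
            continue  # ran out of candidates, stays unmatched
        r = ranked[l][proposals[l]]
        proposals[l] += 1
        rival = holder.get(r)
        if rival is None:
            holder[r] = l
        elif distance(left[l], right[r]) < distance(left[rival], right[r]):
            holder[r] = l       # the closer proposer wins the slot
            free.append(rival)
        else:
            free.append(l)      # rejected, will try the next candidate
    return {l: r for r, l in holder.items()}

# Toy one-dimensional "embeddings": keys 1 and 2 both prefer key 10
left = {1: 0, 2: 3, 3: 50}
right = {10: 2, 20: 10, 30: 40}
pairs = stable_join(left, right, lambda a, b: abs(a - b))
```

A plain nearest-neighbor search would map both 1 and 2 to 10. The join resolves the collision by giving 10 to the closer entry and moving the other one to its next-best candidate.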

Read more in post: From Dating to Vector Search - "Stable Marriages" on a Planetary Scale 👩❤️👨

Functionality

By now, the core functionality is supported across all bindings. Broader functionality is ported per request.

| | C++ 11 | Python 3 | C 99 | Java | JavaScript | Rust | GoLang | Swift |
|---|---|---|---|---|---|---|---|---|
| Add, search | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| Save, load, view | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| User-defined metrics | ✅ | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |
| Joins | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |
| Variable-length vectors | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |
| 4B+ capacities | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |

Application Examples

USearch + AI = Multi-Modal Semantic Search

AI has a growing number of applications, but one of the coolest classic ideas is to use it for Semantic Search. One can take an encoder model, like the multi-modal UForm, and a web-programming framework, like UCall, and build a text-to-image search platform in just 20 lines of Python.

import ucall

import uform

import numpy as np

from PIL import Image

from usearch.index import Index

server = ucall.Server()

model = uform.get_model('unum-cloud/uform-vl-multilingual')

index = Index(ndim=256)

@server

def add(key: int, photo: Image.Image):

    # Encode the photo and store its embedding under the given key

    image = model.preprocess_image(photo)

    vector = model.encode_image(image).detach().numpy()

    index.add(key, vector.flatten(), copy=True)

@server

def search(query: str) -> np.ndarray:

    # Encode the text query and return the keys of the 3 closest images

    tokens = model.preprocess_text(query)

    vector = model.encode_text(tokens).detach().numpy()

    matches = index.search(vector.flatten(), 3)

    return matches.keys

server.run()

We have pre-processed some commonly used datasets, cleaned the images, produced the vectors, and pre-built the index.

| Dataset | Modalities | Images | Download |
|---|---|---|---|
| Unsplash 25K | Images & Descriptions | 25 K | HuggingFace / Unum |
| Conceptual Captions 3M | Images & Descriptions | 3 M | HuggingFace / Unum |
| Arxiv 2M | Titles & Abstracts | 2 M | HuggingFace / Unum |

USearch + RDKit = Molecular Search

Comparing molecule graphs and searching for similar structures is expensive and slow. It can be seen as a special case of the NP-Complete Subgraph Isomorphism problem. Luckily, domain-specific approximate methods exist. The one commonly used in Chemistry is to generate structures from SMILES and later hash them into binary fingerprints. The latter are searchable with bitwise similarity metrics, like the Tanimoto coefficient. Below is an example using the RDKit package.

from usearch.index import Index, MetricKind

from rdkit import Chem

from rdkit.Chem import AllChem

import numpy as np

molecules = [Chem.MolFromSmiles('CCOC'), Chem.MolFromSmiles('CCO')]

encoder = AllChem.GetRDKitFPGenerator()

fingerprints = np.vstack([encoder.GetFingerprint(x) for x in molecules])

fingerprints = np.packbits(fingerprints, axis=1)

index = Index(ndim=2048, metric=MetricKind.Tanimoto)

keys = np.arange(len(molecules))

index.add(keys, fingerprints)

matches = index.search(fingerprints, 10)
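For readers unfamiliar with the Tanimoto coefficient, the bitwise computation can be sketched in a few lines of plain Python. The 8-bit fingerprints and the helper names below are toy assumptions for illustration. Real fingerprints, like the 2048-bit ones above, work the same way, only with more bits.

```python
def popcount(x: int) -> int:
    """Number of set bits in a non-negative integer."""
    return bin(x).count("1")

def tanimoto_distance(a: int, b: int) -> float:
    """1 - |A ∩ B| / |A ∪ B| over the set bits of two fingerprints."""
    union = popcount(a | b)
    if union == 0:
        return 0.0  # two empty fingerprints are treated as identical
    return 1.0 - popcount(a & b) / union

# Toy 8-bit fingerprints stored as Python integers
fp_a = 0b1011_0010
fp_b = 0b1011_0110  # shares 4 of the 5 bits set in either fingerprint
fp_c = 0b0100_1001  # shares no bits with fp_a

close = tanimoto_distance(fp_a, fp_b)
far = tanimoto_distance(fp_a, fp_c)
```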

Integrations

- GPT-Cache.

- LangChain.

- ClickHouse.

- Microsoft Semantic Kernel.

Citations

@software{Vardanian_USearch_2022,

doi = {10.5281/zenodo.7949416},

author = {Vardanian, Ash},

title = {{USearch by Unum Cloud}},

url = {https://github.com/unum-cloud/usearch},

version = {0.13.0},

year = {2022},

month = jun,

}